As enterprises accelerate generative AI adoption, one strategic question consistently arises:

Should we fine-tune our large language model (LLM) or rely on prompt engineering?

Both approaches improve AI performance — but they differ significantly in cost, scalability, operational complexity, and governance impact.

This guide breaks down LLM fine-tuning vs prompt engineering from an enterprise decision-making perspective.

What is Prompt Engineering?

Prompt engineering is the practice of designing structured inputs that guide a pre-trained Large Language Model (LLM) to produce high-quality outputs—without modifying the model’s internal weights.

Instead of retraining the model, you refine how instructions are written. Effective prompt engineering focuses on instruction clarity, context framing, role prompting, few-shot examples, output formatting control, and chain-of-thought reasoning.

For example:

A basic prompt such as “Write a product description” produces generic results.

An optimized prompt such as “You are a SaaS marketing expert. Write a persuasive product description for a cloud-based cybersecurity platform targeting mid-sized enterprises. Include three key benefits and a strong CTA” generates more structured and relevant output.

No retraining is required—only better input design. This makes prompt engineering fast, flexible, and cost-efficient.

What is LLM Fine-Tuning?

Fine-tuning involves retraining a pre-trained LLM on domain-specific data so that it adapts its internal parameters to better understand specialized language, workflows, and behavioral patterns.

Unlike prompt engineering, fine-tuning changes how the model “thinks,” not just how it interprets instructions.

The process typically includes curating proprietary datasets, running GPU-based training cycles, validating and testing performance, versioning models, and conducting ongoing retraining to prevent drift.

Fine-tuning produces a highly specialized model tailored to enterprise requirements—but it requires significant infrastructure, cost investment, and operational maturity.

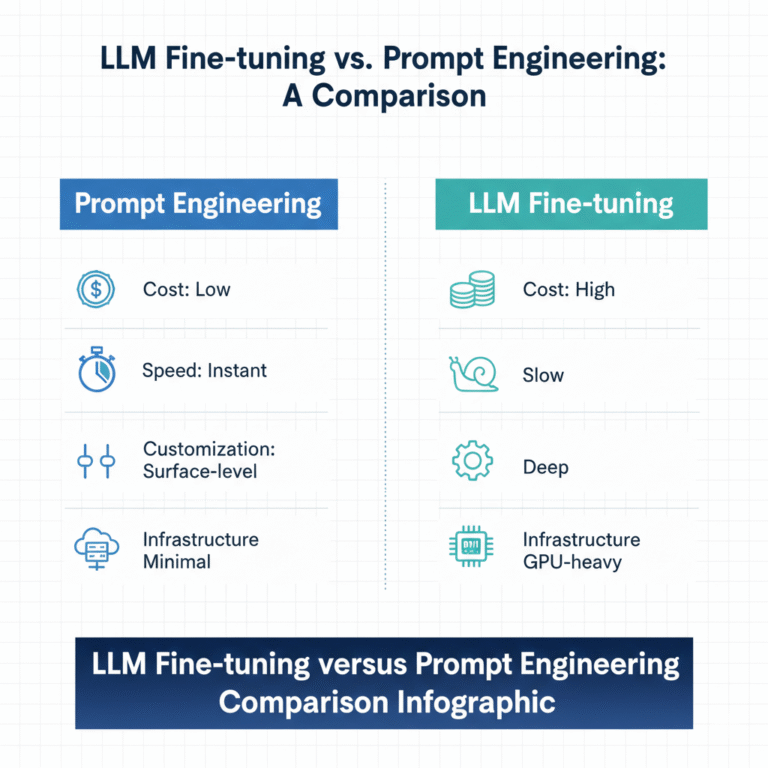

Core Differences: Fine-Tuning vs Prompt Engineering

Criteria | Prompt Engineering | Fine-Tuning |

Cost | Low | High |

Deployment Speed | Immediate | Weeks |

Infrastructure | Minimal | Requires ML stack |

Customization Depth | Moderate | High |

Maintenance | Simple | Continuous retraining |

Scalability | High | Moderate |

Governance Complexity | Moderate | High |

Prompt engineering emphasizes agility and experimentation. It offers immediate deployment, minimal infrastructure requirements, lower cost, and high scalability across multiple use cases.

Fine-tuning emphasizes specialization and control. It requires dedicated ML infrastructure, longer deployment timelines, higher upfront and operational cost, and continuous maintenance. However, it delivers deeper domain adaptation and tighter output consistency.

In short, prompting optimizes interaction. Fine-tuning optimizes internal model behavior.

When to Choose Prompt Engineering?

Prompt engineering is ideal when enterprises need rapid deployment, budget efficiency, flexibility across diverse workflows, and minimal infrastructure investment. It works especially well when combined with Retrieval-Augmented Generation (RAG), which grounds model outputs in real-time enterprise data.

Common enterprise applications include AI chatbots, marketing content generation, internal knowledge assistants, and sales copilots. In many scenarios, prompt engineering combined with RAG delivers strong performance without the expense of fine-tuning.

For early-stage or mid-scale AI deployments, prompting often provides the best balance of speed and value.

When to Choose Fine-Tuning?

Fine-tuning becomes valuable when domain language is highly specialized, output consistency must be tightly controlled, compliance demands deterministic behavior, or proprietary workflows require deeper adaptation.

Industries such as legal services, healthcare, finance, and insurance often benefit from fine-tuned models in critical decision-support systems.

However, organizations must account for ongoing retraining, monitoring, version control, and governance processes. Fine-tuned models are not “set and forget” assets—they require disciplined lifecycle management.

Cost Considerations for Enterprises

From a financial standpoint, prompt engineering has lower upfront and operational costs. Expenses are typically tied to API usage and limited engineering effort.

Fine-tuning introduces additional costs related to data preparation, labeling, GPU compute resources, storage infrastructure, deployment pipelines, monitoring systems, and continuous retraining. Over time, this increases total cost of ownership significantly compared to prompt optimization alone.

Enterprises must evaluate whether the incremental performance gain justifies the operational overhead.

Risk & Governance Considerations

Prompt engineering carries risks such as prompt injection attacks and context limitations, but it avoids the complexity of managing custom-trained models.

Fine-tuning introduces additional risks, including overfitting, potential data leakage, compliance exposure, and model drift. It requires mature governance frameworks, audit mechanisms, and structured MLOps practices.

For regulated industries, governance maturity often determines whether fine-tuning is viable.

The Hybrid Enterprise Approach

Most advanced enterprises adopt a hybrid strategy. They begin with prompt engineering for rapid experimentation, integrate RAG to ground outputs in enterprise knowledge, and selectively apply fine-tuning to high-impact, high-risk workflows.

This approach balances cost efficiency with domain specialization while minimizing operational risk.

Strategic Decision Framework

Before choosing, enterprises should evaluate:

- Do we require real-time data access?

- How specialized is our domain language?

- What is our AI budget and infrastructure maturity?

- Do we need strict output consistency?

- Can we support ongoing model maintenance?

Answering these questions clarifies whether prompt optimization is sufficient or fine-tuning is justified.

Before choosing an approach, enterprises should evaluate whether they require real-time data grounding, how specialized their domain language is, their available AI budget and infrastructure maturity, whether strict output consistency is necessary, and whether they can support long-term model maintenance.

These questions clarify whether prompt optimization is sufficient—or if fine-tuning is strategically justified

Conclusion

There is no universal winner between prompt engineering and fine-tuning. Prompt engineering delivers speed, flexibility, and cost efficiency, making it ideal for most early and mid-stage enterprise AI deployments.

Fine-tuning provides deeper domain specialization and tighter control—but demands higher investment and operational maturity.

For many organizations, the optimal path is sequential: start with prompting, enhance with retrieval, and fine-tune only where strategic value warrants the added complexity.

Explore our AI/ML services below

- Connect us – https://internetsoft.com/

- Call or Whatsapp us – +1 305-735-9875

ABOUT THE AUTHOR

Abhishek Bhosale

COO, Internet Soft

Abhishek is a dynamic Chief Operations Officer with a proven track record of optimizing business processes and driving operational excellence. With a passion for strategic planning and a keen eye for efficiency, Abhishek has successfully led teams to deliver exceptional results in AI, ML, core Banking and Blockchain projects. His expertise lies in streamlining operations and fostering innovation for sustainable growth