As artificial intelligence becomes a core component of enterprise operations, organizations must ensure that AI systems are deployed responsibly, transparently, and in compliance with regulations. AI models can influence critical decisions in areas such as finance, healthcare, supply chain operations, and customer engagement. Without proper oversight, these systems may introduce risks related to bias, security, privacy, and accountability.

AI governance frameworks provide structured guidelines that help organizations manage the development, deployment, and monitoring of artificial intelligence systems. These frameworks define policies, processes, and controls that ensure AI technologies operate in a safe, ethical, and compliant manner.

By implementing a strong governance structure, organizations can balance innovation with risk management while building trust with stakeholders. In this post, we explore what AI governance frameworks are, why they are important, how they work, and how enterprises can implement them effectively.

What Is an AI Governance Framework?

An AI governance framework is a structured system of policies, standards, and operational processes that guide how artificial intelligence systems are designed, deployed, and managed within an organization.

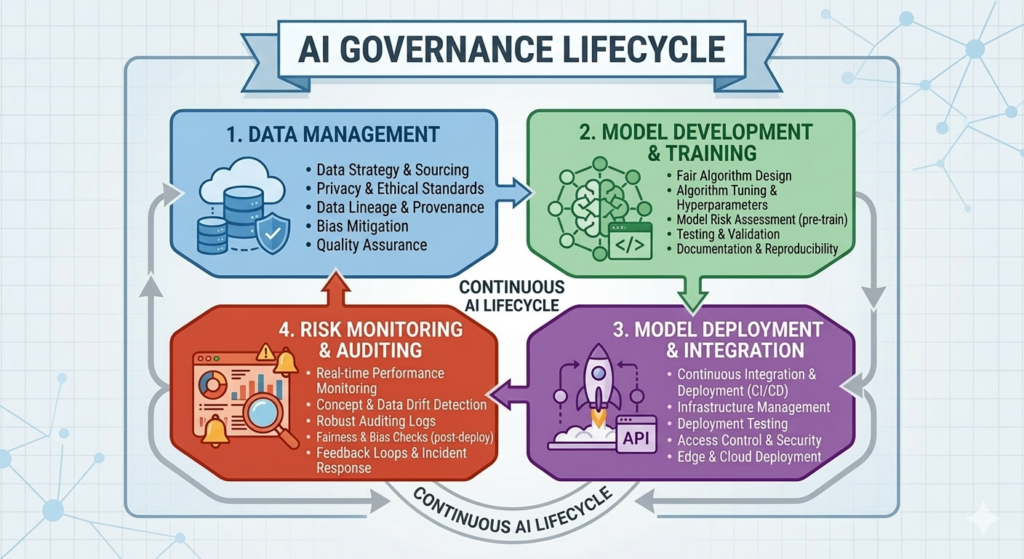

The purpose of AI governance is to ensure that AI technologies align with organizational values, regulatory requirements, and ethical standards. Governance frameworks establish clear rules for how data is used, how models are developed, how decisions are monitored, and who is accountable for AI outcomes.

These frameworks typically involve cross-functional collaboration between technical teams, business leaders, legal experts, and compliance officers. Together, they create policies that manage the lifecycle of AI systems, from data collection and model training to deployment and ongoing monitoring.

A well-defined governance framework ensures that AI initiatives deliver value while minimizing risks related to bias, misuse, or regulatory violations.

Why AI Governance Is Important

As organizations increasingly rely on AI-driven systems, governance becomes essential for maintaining transparency, accountability, and regulatory compliance.

One major reason for AI governance is risk management. AI models can produce unintended consequences if they are trained on biased data or deployed without proper oversight. Governance frameworks help organizations identify and mitigate these risks before they affect business operations or customer outcomes.

Another key benefit is regulatory compliance. Governments and regulatory bodies are introducing policies that require organizations to demonstrate responsible use of AI technologies. Governance frameworks help ensure that AI systems meet these regulatory standards.

AI governance also promotes trust and transparency. When organizations clearly document how AI systems operate and monitor their performance, stakeholders are more confident in the technology.

Additionally, governance frameworks support scalability. As AI adoption grows within an organization, governance structures ensure consistent standards across multiple teams and projects.

Key Components of an AI Governance Framework

An effective AI governance framework consists of several interconnected components that guide the entire lifecycle of AI systems.

Policy and Ethical Guidelines

Organizations must establish policies that define acceptable uses of AI technologies. These policies outline ethical principles such as fairness, transparency, accountability, and privacy protection.

Ethical guidelines ensure that AI systems align with both legal requirements and organizational values.

Data Governance

AI systems rely heavily on data, making data governance a critical component of AI oversight. Data governance policies ensure that datasets are collected, stored, and used responsibly.

This includes managing data quality, protecting sensitive information, and ensuring compliance with privacy regulations.
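As a rough illustration, checks like these can be automated before a dataset is approved for training. The sketch below is a minimal example, not a complete data governance tool: the column names in `SENSITIVE_COLUMNS`, the `MAX_MISSING_RATIO` threshold, and the `review_dataset` helper are all hypothetical and would need to reflect an organization's own policies.

```python
from dataclasses import dataclass, field

# Hypothetical policy values; a real policy would come from the governance team.
SENSITIVE_COLUMNS = {"ssn", "date_of_birth", "email"}
MAX_MISSING_RATIO = 0.05


@dataclass
class DatasetReport:
    sensitive_columns: list[str] = field(default_factory=list)
    columns_over_missing_limit: list[str] = field(default_factory=list)

    @property
    def approved(self) -> bool:
        # A dataset passes only if nothing was flagged.
        return not self.sensitive_columns and not self.columns_over_missing_limit


def review_dataset(rows: list[dict]) -> DatasetReport:
    """Flag sensitive columns and columns with too many missing values."""
    report = DatasetReport()
    if not rows:
        return report
    columns = sorted({key for row in rows for key in row})
    for column in columns:
        if column.lower() in SENSITIVE_COLUMNS:
            report.sensitive_columns.append(column)
        missing = sum(1 for row in rows if row.get(column) in (None, ""))
        if missing / len(rows) > MAX_MISSING_RATIO:
            report.columns_over_missing_limit.append(column)
    return report


rows = [
    {"customer_id": 1, "email": "a@example.com", "income": 52000},
    {"customer_id": 2, "email": "b@example.com", "income": None},
]
report = review_dataset(rows)
print(report.approved, report.sensitive_columns, report.columns_over_missing_limit)
```

In this sample, the `email` column is flagged as sensitive and `income` exceeds the missing-value limit, so the dataset would be sent back for review rather than approved.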

Model Development Standards

Governance frameworks should define clear standards for how AI models are developed and validated. These standards help ensure that models are accurate, unbiased, and aligned with business objectives.

Documentation of model design, training data sources, and evaluation metrics improves transparency and accountability.
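One lightweight way to capture this documentation is a structured record stored alongside each model version. The sketch below assumes a hypothetical `ModelRecord` structure; the field names and sample values are illustrative and not taken from any specific standard.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ModelRecord:
    name: str
    version: str
    owner: str
    intended_use: str
    training_data_sources: list[str]
    evaluation_metrics: dict[str, float]

    def missing_fields(self) -> list[str]:
        """Return the names of fields that were left empty."""
        return [key for key, value in asdict(self).items() if not value]


# Illustrative values only.
record = ModelRecord(
    name="credit-scoring",
    version="1.2.0",
    owner="risk-analytics-team",
    intended_use="Rank loan applications for manual review",
    training_data_sources=["loans_2021_2023.csv"],
    evaluation_metrics={"auc": 0.87},
)

# A release process could refuse to proceed while this list is non-empty.
print(record.missing_fields())
print(json.dumps(asdict(record), indent=2))
```

Keeping the record as plain data makes it easy to export as JSON for auditors and to block a release when required fields are empty.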

Monitoring and Risk Management

Once AI systems are deployed, continuous monitoring is essential to ensure that models perform as expected. Monitoring processes track performance metrics, detect anomalies, and identify potential biases or operational issues.

Risk management strategies help organizations respond quickly if models produce inaccurate or harmful outcomes.
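A monitoring check of this kind might look like the following sketch. It compares live accuracy against a baseline and measures the gap in positive-prediction rates between groups as a simple bias signal. The `check_model_health` function, its thresholds, and the group labels are assumptions made for illustration; real deployments would choose metrics and limits suited to the use case.

```python
def positive_rate(predictions: list[int]) -> float:
    """Share of predictions equal to 1 (0.0 for an empty list)."""
    return sum(predictions) / len(predictions) if predictions else 0.0


def check_model_health(
    baseline_accuracy: float,
    live_accuracy: float,
    predictions_by_group: dict[str, list[int]],
    max_accuracy_drop: float = 0.05,  # hypothetical threshold
    max_rate_gap: float = 0.10,       # hypothetical threshold
) -> list[str]:
    """Return alert names for any monitoring rule that is violated."""
    alerts = []
    if baseline_accuracy - live_accuracy > max_accuracy_drop:
        alerts.append("accuracy_drop")
    rates = [positive_rate(preds) for preds in predictions_by_group.values()]
    if rates and max(rates) - min(rates) > max_rate_gap:
        alerts.append("positive_rate_gap")
    return alerts


alerts = check_model_health(
    baseline_accuracy=0.91,
    live_accuracy=0.84,
    predictions_by_group={"group_a": [1, 1, 0, 1], "group_b": [0, 1, 0, 0]},
)
print(alerts)
```

Here both rules fire: accuracy fell by about 0.07, and the positive-prediction rates differ by 0.5 between the two groups. Each alert name could then be routed to the team responsible for investigating it.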

Accountability and Oversight

Clear accountability structures ensure that specific individuals or teams are responsible for AI decisions and outcomes. Governance frameworks often include review boards or committees that oversee high-impact AI applications.

This oversight helps organizations maintain control over AI systems and address issues proactively.

How AI Governance Frameworks Work in Practice

In real-world environments, AI governance frameworks guide decision-making throughout the AI lifecycle.

During the initial planning phase, organizations evaluate potential risks associated with AI projects and determine whether the proposed system aligns with governance policies. Risk assessments help identify ethical, operational, or regulatory concerns before development begins.
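A planning-phase risk assessment can be expressed as a short intake questionnaire that maps each project to a review path. The sketch below is one possible shape: the three questions, the scoring rule, and the `REQUIRED_REVIEWS` mapping are hypothetical and are not drawn from any regulation or published framework.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical sign-offs required before development may begin.
REQUIRED_REVIEWS = {
    RiskTier.LOW: ["team_lead"],
    RiskTier.MEDIUM: ["team_lead", "compliance"],
    RiskTier.HIGH: ["team_lead", "compliance", "legal", "review_board"],
}


def assess_project(
    affects_individuals: bool,
    uses_personal_data: bool,
    fully_automated: bool,
) -> RiskTier:
    """Assign a tier from the number of risk factors that apply."""
    score = sum([affects_individuals, uses_personal_data, fully_automated])
    if score >= 2:
        return RiskTier.HIGH
    if score == 1:
        return RiskTier.MEDIUM
    return RiskTier.LOW


tier = assess_project(
    affects_individuals=True,
    uses_personal_data=True,
    fully_automated=False,
)
print(tier.value, REQUIRED_REVIEWS[tier])
```

A simple count is easy to explain to non-technical reviewers, although a real assessment would likely weight factors differently and record the reasoning behind each answer.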

During model development, governance standards ensure that data sources are validated, biases are assessed, and model performance is properly evaluated. Documentation processes capture important details about how models are built and trained.

Once AI systems are deployed, monitoring tools continuously track model behavior and performance. If anomalies or unexpected outcomes occur, governance procedures define how teams should investigate and resolve the issue.

Regular audits and reviews ensure that AI systems remain compliant with evolving regulations and organizational policies.

Common AI Governance Frameworks Used by Organizations

Several global organizations and regulatory bodies have developed governance frameworks to guide responsible AI adoption.

The NIST AI Risk Management Framework provides guidelines for identifying, managing, and reducing risks associated with AI systems. It emphasizes trustworthiness, transparency, and accountability.

The OECD AI Principles promote responsible AI practices that prioritize fairness, transparency, and human-centered values.

The EU AI Act introduces regulatory requirements for AI systems based on risk categories, with the strictest obligations applying to high-risk applications.

Large technology companies also develop internal governance frameworks to ensure responsible development and deployment of AI systems within their organizations.

These frameworks help establish industry standards for safe and ethical AI usage.

Best Practices for Implementing AI Governance

Organizations implementing AI governance should start by establishing a cross-functional governance team. This team should include representatives from technical, legal, compliance, and business departments to ensure balanced oversight.

Clear documentation is another critical practice. Maintaining detailed records of data sources, model development processes, and decision logic improves transparency and simplifies audits.
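Records are easier to audit when they are append-only and tamper-evident. The sketch below shows one way to do that with a simple hash chain, where each entry stores the hash of the previous one. The `append_entry` and `verify` helpers and the event names are hypothetical; timestamps and persistent storage are left out to keep the example short.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Stable SHA-256 hash of an entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list[dict], event: str, details: dict) -> dict:
    """Append an entry that is linked to the previous entry's hash."""
    previous_hash = log[-1]["hash"] if log else ""
    body = {"event": event, "details": details, "previous_hash": previous_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Check that no entry was altered, removed, or reordered."""
    previous_hash = ""
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["previous_hash"] != previous_hash:
            return False
        if entry["hash"] != _digest(body):
            return False
        previous_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "dataset_approved", {"dataset": "loans_2021_2023.csv"})
append_entry(audit_log, "model_released", {"model": "credit-scoring", "version": "1.2.0"})
print(verify(audit_log))
```

If any earlier entry is edited after the fact, its stored hash no longer matches its contents and `verify` returns `False`, which gives auditors a quick integrity check before they read the records themselves.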

Organizations should also integrate governance processes into existing AI development workflows rather than treating them as separate compliance tasks. This ensures that governance becomes a natural part of AI operations.

Continuous monitoring and periodic audits help organizations detect emerging risks and maintain long-term system reliability.

Finally, employee training programs can help teams understand responsible AI practices and governance policies, ensuring consistent implementation across the organization.

Challenges in AI Governance Implementation

Implementing AI governance frameworks can be complex, particularly in large organizations with multiple AI initiatives.

One challenge involves balancing innovation with regulation. Excessive governance controls may slow down AI experimentation, while insufficient oversight may increase risk exposure.

Another challenge is managing evolving regulations. Governments and international organizations continue to develop new policies related to AI ethics, privacy, and security.

Data management also presents challenges. Maintaining high-quality datasets while ensuring compliance with privacy regulations requires strong data engineering and governance practices.

Finally, scaling governance frameworks across global organizations requires standardized processes that remain flexible enough to accommodate different regulatory environments.

Addressing these challenges requires a strategic governance approach that combines strong policies with practical implementation processes.

Conclusion

AI governance frameworks play a critical role in ensuring that artificial intelligence systems are deployed responsibly, ethically, and in compliance with regulatory standards. By establishing clear policies, accountability structures, and monitoring processes, organizations can manage the risks associated with AI while maximizing its benefits.

A well-designed governance framework supports transparency, improves trust, and enables organizations to scale AI initiatives safely across business operations. As AI technologies continue to evolve, governance frameworks will remain essential for balancing innovation with responsibility.

Organizations that proactively implement AI governance structures will be better positioned to leverage artificial intelligence while maintaining ethical standards, regulatory compliance, and long-term business sustainability.

Explore our AI/ML services below

- Connect with us: https://internetsoft.com/

- Call or WhatsApp us: +1 305-735-9875

ABOUT THE AUTHOR

Abhishek Bhosale

COO, Internet Soft

Abhishek is a dynamic Chief Operations Officer with a proven track record of optimizing business processes and driving operational excellence. With a passion for strategic planning and a keen eye for efficiency, Abhishek has successfully led teams to deliver exceptional results in AI, ML, core banking, and blockchain projects. His expertise lies in streamlining operations and fostering innovation for sustainable growth.