Artificial Intelligence is no longer experimental—it’s operational. From AI-powered SaaS platforms to enterprise copilots, companies are investing heavily in AI to drive efficiency and growth. But as AI adoption scales, costs can quickly spiral out of control.

In this blog, we’ll break down practical AI cost optimization strategies that software development companies and enterprises can implement to reduce AI spend without compromising performance or innovation.

Why AI Costs Escalate Faster Than Expected

AI systems differ from traditional software in one critical way: their compute demand does not stop at deployment. Traditional applications tend to scale predictably with users, but AI systems need ongoing compute for training, inference, monitoring, and retraining. As adoption grows, so does infrastructure usage, often faster than anticipated.

Common cost drivers include high GPU/TPU usage for training and inference, over-provisioned cloud environments, inefficient or oversized model architectures, uncontrolled API calls and token consumption, and redundant or poorly designed data pipelines. Without proactive governance, AI initiatives can quickly shift from strategic investments to financial burdens.

1. Right-Size AI Infrastructure from Day One

A common mistake in AI projects is over-engineering infrastructure early. Teams often provision large GPU clusters or high-end instances before validating workload requirements, leading to unnecessary spend.

A more sustainable approach involves starting with smaller instance types and scaling incrementally as usage grows. Autoscaling should be enabled for inference workloads to match demand dynamically. Development, testing, and production environments must be isolated to avoid accidental resource overlap. Regular audits of idle or underutilized compute resources help eliminate waste.
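A regular audit of idle compute can start as a very small script. The sketch below assumes you have already exported utilization samples from your cloud provider's monitoring tool. The `InstanceUsage` structure, the 20% threshold, and the hourly prices are illustrative assumptions, not real quotes or a provider API.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class InstanceUsage:
    name: str
    hourly_cost_usd: float        # hypothetical price, not a real quote
    gpu_utilization: list[float]  # sampled utilization, 0.0 to 1.0


def find_underutilized(instances, threshold=0.2):
    """Return (name, avg_utilization, monthly_cost) for instances below threshold."""
    flagged = []
    for inst in instances:
        avg = mean(inst.gpu_utilization)
        if avg < threshold:
            # Approximate a month as 30 days of always-on billing.
            monthly_cost = inst.hourly_cost_usd * 24 * 30
            flagged.append((inst.name, round(avg, 2), round(monthly_cost, 2)))
    # Most expensive waste first.
    return sorted(flagged, key=lambda row: row[2], reverse=True)


fleet = [
    InstanceUsage("train-gpu-1", 3.00, [0.85, 0.90, 0.78]),
    InstanceUsage("dev-gpu-2", 3.00, [0.05, 0.10, 0.00]),
    InstanceUsage("staging-gpu-3", 1.50, [0.15, 0.10, 0.20]),
]
report = find_underutilized(fleet)
for name, avg, cost in report:
    print(f"{name}: avg utilization {avg:.0%}, about ${cost:,.0f}/month")
```

Sorting by monthly cost puts the largest potential savings at the top, which is usually where a review should begin.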

Cloud providers such as Amazon Web Services, Microsoft Azure, and Google Cloud Platform offer detailed cost dashboards—but cost visibility only adds value when reviewed consistently.

2. Optimize Model Selection (Bigger Is Not Always Better)

Large models often provide incremental performance gains at disproportionately higher costs. Training and serving oversized models can significantly inflate infrastructure and inference expenses.

Cost-efficient strategies include using distilled or fine-tuned models instead of training from scratch, selecting task-specific models over general-purpose LLMs, applying quantization and pruning techniques to reduce model size, and caching frequent inference results to avoid repeated computation.
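To see why quantization matters for cost, it helps to estimate how much memory the model weights alone require at each precision. The sketch below is back-of-the-envelope arithmetic: it counts weights only and ignores activations, caches, and runtime overhead. The 7-billion-parameter model is a hypothetical example.

```python
# Bytes needed to store one parameter at each numeric precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


num_params = 7_000_000_000  # hypothetical 7B-parameter model
for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gb(num_params, precision):.1f} GB")
```

Halving the bytes per parameter halves the weight footprint, which can be the difference between needing a multi-GPU instance and fitting on a single smaller one. Whether accuracy holds up after quantization has to be measured per task.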

In many enterprise scenarios, optimized mid-sized models deliver most of the required business value at a fraction of the operational cost.

3. Control Inference and API Usage

For customer-facing applications such as copilots, chatbots, and recommendation systems, inference often becomes the largest recurring expense. Every request consumes compute, so uncontrolled usage scales costs rapidly.

Organizations can reduce inference costs by implementing request batching, setting token and rate limits, using edge inference where feasible, introducing caching for repeated queries, and monitoring AI consumption per user or feature. Granular visibility into usage patterns enables more precise cost governance.
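Caching, token limits, and per-user monitoring can live in one thin wrapper around the model call. The sketch below is a minimal illustration under stated assumptions: `MeteredClient` and `fake_model` are hypothetical names, the model function is assumed to return the response text plus the tokens it consumed, and resetting the daily counters is left out.

```python
import hashlib
from collections import defaultdict


class MeteredClient:
    def __init__(self, model_fn, daily_token_limit):
        self.model_fn = model_fn  # hypothetical: prompt -> (text, tokens_used)
        self.daily_token_limit = daily_token_limit
        self.cache = {}
        self.tokens_by_user = defaultdict(int)

    def ask(self, user_id, prompt):
        # Normalize the prompt so trivially different repeats share a cache entry.
        key = hashlib.sha256(prompt.strip().lower().encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]  # cache hit: no model call, no tokens charged
        if self.tokens_by_user[user_id] >= self.daily_token_limit:
            raise RuntimeError(f"token budget exhausted for {user_id}")
        text, tokens_used = self.model_fn(prompt)
        self.tokens_by_user[user_id] += tokens_used
        self.cache[key] = text
        return text


def fake_model(prompt):
    # Stand-in for a real inference call; counts whitespace-separated words as tokens.
    return f"answer to: {prompt}", len(prompt.split())


client = MeteredClient(fake_model, daily_token_limit=10)
client.ask("alice", "What is our refund policy?")    # model call, tokens charged
client.ask("alice", "what is our refund policy?  ")  # served from cache
print(dict(client.tokens_by_user))
```

The `tokens_by_user` counter doubles as the per-user consumption metric. In a real product the same counter could be keyed by feature as well, and the budget check would typically run before the request reaches a paid API.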

Inference optimization is essential for maintaining predictable margins in AI-driven products.

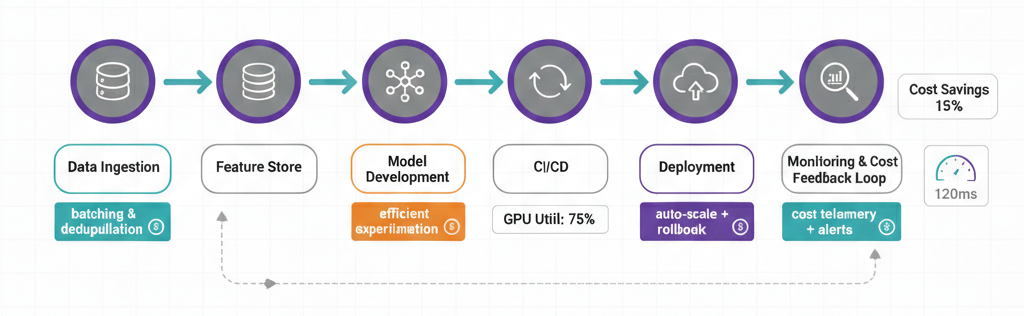

4. Adopt Cost-Aware MLOps Practices

MLOps is not only about automation and faster deployment—it must also incorporate financial discipline. Cost-aware MLOps integrates cost monitoring directly into the AI lifecycle.

This includes automated cost alerts, benchmarking models based on both performance and cost, scheduling retraining instead of running it continuously, and decommissioning underperforming or redundant models. Each model should be evaluated not just for accuracy but for return on compute investment.
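Two of these practices are easy to sketch: ranking models by cost as well as accuracy, and raising an alert when projected spend exceeds budget. The code below is an illustration under stated assumptions. The model names, accuracy figures, and dollar amounts are made up, and the linear spend projection is a deliberately simple choice.

```python
from dataclasses import dataclass


@dataclass
class ModelBenchmark:
    name: str
    accuracy: float                  # fraction of correct predictions, 0.0 to 1.0
    cost_per_1k_requests_usd: float  # hypothetical serving cost


def cost_per_1k_correct(benchmark):
    """Serving cost per 1,000 correct predictions."""
    return benchmark.cost_per_1k_requests_usd / benchmark.accuracy


def rank_by_value(benchmarks, min_accuracy):
    """Drop models below the accuracy bar, then sort cheapest-per-correct first."""
    eligible = [b for b in benchmarks if b.accuracy >= min_accuracy]
    return sorted(eligible, key=cost_per_1k_correct)


def budget_alert(spend_to_date, days_elapsed, days_in_month, monthly_budget):
    """Project month-end spend linearly and report whether it exceeds budget."""
    projected = spend_to_date / days_elapsed * days_in_month
    return projected > monthly_budget, round(projected, 2)


candidates = [
    ModelBenchmark("large-model", 0.95, 12.0),
    ModelBenchmark("mid-model", 0.92, 3.0),
    ModelBenchmark("small-model", 0.80, 1.0),
]
ranked = rank_by_value(candidates, min_accuracy=0.90)
print([b.name for b in ranked])

over_budget, projected = budget_alert(4000, 10, 30, 10000)
print(over_budget, projected)
```

Setting a minimum accuracy first keeps the ranking honest: the cheapest model is excluded if it cannot meet the business requirement, and the remaining candidates compete on cost per correct result.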

Treating AI models as financial assets helps keep them aligned with business value.

5. Optimize Data Pipelines and Storage

Inefficient data pipelines are a hidden source of AI cost escalation. Storing duplicate, outdated, or irrelevant data increases both storage and compute requirements during training.

Effective data strategies include eliminating low-quality or redundant datasets, implementing tiered storage (hot, warm, cold), compressing and archiving historical data, and training models only on relevant feature subsets. Clean and well-structured data reduces training time, accelerates experimentation, and lowers overall cloud expenditure.
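Tiered storage usually comes down to a rule based on how recently data was accessed. The sketch below assigns each dataset to a tier and compares the monthly bill against keeping everything hot. The 30-day and 180-day cutoffs, the dataset names, and the per-GB prices are placeholder assumptions, not any provider's actual rates.

```python
from datetime import date

# Placeholder prices in USD per GB per month; substitute your provider's rates.
PRICE_PER_GB_MONTH = {"hot": 0.020, "warm": 0.010, "cold": 0.002}


def choose_tier(last_accessed, today, warm_after_days=30, cold_after_days=180):
    """Pick a storage tier from the number of days since last access."""
    age_days = (today - last_accessed).days
    if age_days >= cold_after_days:
        return "cold"
    if age_days >= warm_after_days:
        return "warm"
    return "hot"


def monthly_cost(datasets, today, tiered=True):
    """Sum storage cost; with tiered=False everything is billed at the hot rate."""
    total = 0.0
    for _name, size_gb, last_accessed in datasets:
        tier = choose_tier(last_accessed, today) if tiered else "hot"
        total += size_gb * PRICE_PER_GB_MONTH[tier]
    return total


today = date(2026, 2, 1)
datasets = [
    ("clickstream-2026-01", 500, date(2026, 1, 20)),
    ("training-v3", 200, date(2025, 11, 1)),
    ("logs-2024", 1000, date(2024, 6, 1)),
]
print(f"all hot: ${monthly_cost(datasets, today, tiered=False):.2f}")
print(f"tiered:  ${monthly_cost(datasets, today):.2f}")
```

Colder tiers often carry retrieval fees and minimum storage durations, so a real policy would also account for how often archived data is pulled back for retraining.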

6. Leverage Hybrid and Multi-Cloud Strategically

Not every AI workload needs to run entirely in one cloud environment. A hybrid or multi-cloud strategy can improve cost efficiency and negotiating leverage.

On-premises inference may be suitable for predictable, high-volume workloads, while cloud-based training supports burst compute needs. Distributing workloads across providers also reduces vendor lock-in and lets organizations choose the pricing model that best fits each workload.
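Whether a predictable workload belongs on-premises is largely a break-even question. The sketch below compares monthly cloud spend with amortized hardware plus operating costs. Every figure is a hypothetical input, and the model ignores factors such as capacity limits, staffing, and hardware resale value.

```python
def monthly_cloud_cost(gpu_hours, hourly_rate_usd):
    """Pay-as-you-go cost for a month of GPU usage."""
    return gpu_hours * hourly_rate_usd


def monthly_onprem_cost(hardware_cost_usd, amortization_months, monthly_opex_usd):
    """Hardware cost spread evenly over its useful life, plus power, space, and upkeep."""
    return hardware_cost_usd / amortization_months + monthly_opex_usd


def break_even_gpu_hours(hardware_cost_usd, amortization_months,
                         monthly_opex_usd, hourly_rate_usd):
    """GPU-hours per month at which on-premises and cloud cost the same."""
    onprem = monthly_onprem_cost(hardware_cost_usd, amortization_months, monthly_opex_usd)
    return onprem / hourly_rate_usd


# Hypothetical inputs: $36,000 server amortized over 36 months, $500/month opex,
# compared against a $2.50/hour cloud GPU.
hours = break_even_gpu_hours(36_000, 36, 500, 2.50)
print(f"break-even at {hours:.0f} GPU-hours per month")
```

Below the break-even point, pay-as-you-go cloud capacity is cheaper. Above it, steady workloads start to favor owned hardware, provided utilization stays consistently high.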

A strategic infrastructure mix can improve both cost predictability and operational flexibility.

Conclusion

AI cost optimization is not about cutting innovation—it is about designing sustainable, scalable systems. By right-sizing infrastructure, selecting efficient models, controlling inference usage, optimizing data pipelines, and embedding cost governance into MLOps, organizations can significantly reduce AI expenditure while maintaining performance.

Enterprises that treat AI cost management as a strategic capability—not an afterthought—gain long-term competitive advantage and build financially resilient AI ecosystems.

Want to build cost-efficient, production-ready AI solutions?

- Connect with us – https://internetsoft.com/

- Call or WhatsApp us – +1 305-735-9875

As a leading software development company in California, Internet Soft is committed to delivering high-impact AI and machine learning solutions that help businesses stay competitive in an AI-first world. From startups exploring AI adoption to enterprises scaling advanced ML models, Internet Soft provides end-to-end AI services—from strategy and data engineering to model development, deployment, and optimization.

By leveraging the expertise of Internet Soft, a trusted AI/ML development partner, you can be confident that your solutions are built using the latest AI technologies and best practices. Our focus on performance, scalability, and real-world usability ensures that your AI initiatives deliver intelligent experiences, operational efficiency, and sustainable business growth.

ABOUT THE AUTHOR

Abhishek Bhosale

COO, Internet Soft

Abhishek is a dynamic Chief Operations Officer with a proven track record of optimizing business processes and driving operational excellence. With a passion for strategic planning and a keen eye for efficiency, Abhishek has successfully led teams to deliver exceptional results in AI, ML, core banking, and blockchain projects. His expertise lies in streamlining operations and fostering innovation for sustainable growth.