Artificial intelligence systems are increasingly influencing important decisions across industries such as healthcare, finance, manufacturing, and government. While these systems provide powerful predictive capabilities, many advanced machine learning models operate as “black boxes,” making it difficult for humans to understand how they reach specific conclusions.

Explainable AI (XAI) addresses this challenge by making AI systems more transparent and interpretable. Instead of simply providing predictions, XAI helps organizations understand why a model produced a particular outcome. This transparency improves trust, enables regulatory compliance, and supports better human oversight.

As organizations deploy AI in critical workflows, explainability is becoming an essential requirement rather than an optional feature. In this post, we explore what Explainable AI is, why it matters, how it works, and how organizations can implement it effectively.

What Is Explainable AI?

Explainable AI refers to a set of methods and techniques that make machine learning models more understandable to humans. The goal of XAI is to ensure that AI systems can clearly communicate how they arrive at specific predictions or decisions.

Traditional machine learning models such as decision trees or linear regression are relatively easy to interpret because their decision logic is transparent. However, modern models like deep neural networks and complex ensemble algorithms can contain millions of parameters, making their decision processes difficult to interpret.

Explainable AI introduces techniques that allow stakeholders to examine the reasoning behind model outputs. These techniques help answer questions such as which input features influenced the decision, how different variables interact, and how confident the model is in its prediction.

By providing visibility into model behavior, XAI helps organizations ensure that AI systems are reliable, fair, and aligned with business objectives.

Why Is Explainable AI Important?

As AI adoption grows, organizations must ensure that automated decisions remain transparent and accountable. Explainability helps bridge the gap between advanced machine learning systems and human understanding.

One of the primary benefits of Explainable AI is building trust. When users can see why an AI model made a decision, they are more likely to trust and adopt the technology. This is particularly important in industries where AI systems directly impact human lives.

Explainability also supports regulatory compliance. Many industries must comply with rules that require transparency in automated decision-making. Data protection laws and industry-specific standards increasingly emphasize the importance of explainable systems.

Another key advantage is model debugging and improvement. Understanding how a model makes decisions allows data scientists to identify errors, biases, or incorrect assumptions within the model.

Explainable AI also promotes fairness and ethical AI practices by identifying potential biases that could negatively impact certain groups or individuals.

How Explainable AI Works

Explainable AI techniques help interpret complex models by analyzing their internal behavior or approximating their decision-making logic.

Model Transparency

Some models are inherently interpretable. Models such as linear regression, logistic regression, and decision trees allow users to easily understand the relationship between input variables and predicted outcomes.

However, these models may not always achieve the same predictive accuracy as more complex algorithms.
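To make the idea of an inherently interpretable model concrete, here is a minimal sketch of a logistic regression whose explanation is simply "coefficient times feature value". The feature names, coefficients, and applicant data are made-up assumptions for illustration, not taken from any real credit model.

```python
import math

# Hypothetical coefficients for an illustrative logistic regression model.
# Feature names and values are assumptions made up for this sketch.
INTERCEPT = -1.0
COEFFICIENTS = {"income_k": 0.03, "debt_ratio": -2.5, "years_employed": 0.2}

def predict_proba(applicant):
    """Return the approval probability from the logistic model."""
    score = INTERCEPT + sum(COEFFICIENTS[f] * applicant[f] for f in COEFFICIENTS)
    return 1.0 / (1.0 + math.exp(-score))

def explain(applicant):
    """Each feature's contribution to the log-odds is coefficient * value."""
    return {f: COEFFICIENTS[f] * applicant[f] for f in COEFFICIENTS}

applicant = {"income_k": 50, "debt_ratio": 0.4, "years_employed": 3}
contributions = explain(applicant)

# List features from most to least influential for this applicant.
for feature, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{feature}: {value:+.2f}")
print(f"approval probability: {predict_proba(applicant):.2f}")
```

Because every contribution can be read directly from the coefficients, no separate explanation tool is needed for a model of this kind.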

Post-Hoc Explanation Methods

For complex models like neural networks or gradient boosting algorithms, explainability is often achieved using post-hoc interpretation techniques. These methods analyze the model after it has been trained to identify which features influenced predictions.

Techniques such as feature importance analysis help determine which variables have the greatest impact on model outcomes.
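One common post-hoc form of feature importance analysis is permutation importance: shuffle one feature's column and measure how much the model's error grows. The sketch below is a simplified, standard-library-only illustration. The `model` function is a hypothetical stand-in for a trained black box, and the data is synthetic.

```python
import random

def model(row):
    """Stand-in 'black box': a hypothetical scoring function used only for this sketch."""
    return 3.0 * row[0] + 0.5 * row[1]  # the third feature is ignored on purpose

def mean_squared_error(predict, rows, targets):
    return sum((predict(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(predict, rows, targets, n_repeats=10, seed=0):
    """Importance of a feature = average increase in error after shuffling its column."""
    rng = random.Random(seed)
    baseline = mean_squared_error(predict, rows, targets)
    importances = []
    for j in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            increases.append(mean_squared_error(predict, shuffled, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Synthetic data: three random features, targets generated by the stand-in model.
data_rng = random.Random(42)
rows = [[data_rng.random() for _ in range(3)] for _ in range(200)]
targets = [model(r) for r in rows]

importances = permutation_importance(model, rows, targets)
for name, score in zip(["feature_0", "feature_1", "feature_2"], importances):
    print(f"{name}: {score:.3f}")
```

In this setup the first feature should rank highest and the ignored third feature should score zero, which is the kind of sanity check that makes permutation importance useful for debugging.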

Local and Global Explanations

Explainability methods can operate at different levels.

Local explanations focus on explaining individual predictions. For example, they help answer why a model rejected a specific loan application.

Global explanations analyze the model’s overall behavior across the entire dataset, helping organizations understand broader decision patterns and relationships between variables.

Both levels of explanation are valuable for building trustworthy AI systems.
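The difference between the two levels can be sketched with a simple linear scoring model. The weights and applicants below are hypothetical values chosen for illustration: the local explanation looks at one applicant, while the global explanation averages the absolute contributions over the whole (tiny) dataset.

```python
# Hypothetical linear scoring model; weights and data are made up for illustration.
WEIGHTS = {"income_k": 0.03, "debt_ratio": -2.5, "years_employed": 0.2}

applicants = [
    {"income_k": 50, "debt_ratio": 0.4, "years_employed": 3},
    {"income_k": 30, "debt_ratio": 0.8, "years_employed": 1},
    {"income_k": 90, "debt_ratio": 0.2, "years_employed": 10},
]

def local_explanation(applicant):
    """Contribution of each feature to this one applicant's score."""
    return {f: WEIGHTS[f] * applicant[f] for f in WEIGHTS}

def global_explanation(dataset):
    """Mean absolute contribution of each feature across the whole dataset."""
    totals = {f: 0.0 for f in WEIGHTS}
    for row in dataset:
        for feature, contribution in local_explanation(row).items():
            totals[feature] += abs(contribution)
    return {f: total / len(dataset) for f, total in totals.items()}

local = local_explanation(applicants[1])
overall = global_explanation(applicants)

print("Largest negative driver for applicant 1:", min(local, key=local.get))
print("Most influential feature overall:", max(overall, key=overall.get))
```

Here the second applicant's score is pulled down mainly by `debt_ratio`, while `income_k` has the largest average influence across all applicants, so the local and global views can point to different features.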

Common Techniques Used in Explainable AI

Several widely used techniques help interpret complex machine learning models.

Feature importance methods identify which input variables contribute most significantly to predictions. These insights help organizations understand what factors drive model outcomes.

LIME (Local Interpretable Model-Agnostic Explanations) is a technique that explains individual predictions by approximating the complex model with a simpler interpretable model around a specific data point.
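The core idea behind LIME can be sketched in a few steps: perturb the input around the point of interest, query the black box, weight each sample by its proximity, and fit a weighted linear model. The code below is a simplified illustration in that spirit, not the implementation used by the `lime` library. The `black_box` function, the Gaussian perturbation, and the kernel width are all assumptions made for this example.

```python
import math
import random

def black_box(x):
    """Hypothetical nonlinear model standing in for a complex classifier."""
    return 1.0 / (1.0 + math.exp(-(x[0] ** 2 - 2.0 * x[1])))

def solve(a, b):
    """Solve the linear system a @ w = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        w[i] = (m[i][n] - sum(m[i][j] * w[j] for j in range(i + 1, n))) / m[i][i]
    return w

def local_surrogate(predict, point, n_samples=500, scale=0.3, kernel_width=0.5, seed=0):
    """Fit a proximity-weighted linear model to the black box around `point`."""
    rng = random.Random(seed)
    d = len(point)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        sample = [p + rng.gauss(0.0, scale) for p in point]
        dist2 = sum((s - p) ** 2 for s, p in zip(sample, point))
        weight = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        features = [1.0] + [s - p for s, p in zip(sample, point)]  # centered on the point
        y = predict(sample)
        for i in range(d + 1):
            xtwy[i] += weight * features[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * features[i] * features[j]
    # Weighted least squares via the normal equations.
    return solve(xtwx, xtwy)  # [intercept, slope for feature 0, slope for feature 1]

point = [1.0, 0.5]
intercept, slope_0, slope_1 = local_surrogate(black_box, point)
print(f"local effect of feature 0: {slope_0:+.2f}")
print(f"local effect of feature 1: {slope_1:+.2f}")
```

The signs and sizes of the fitted slopes serve as the local explanation: around this particular point, increasing the first feature raises the prediction and increasing the second lowers it.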

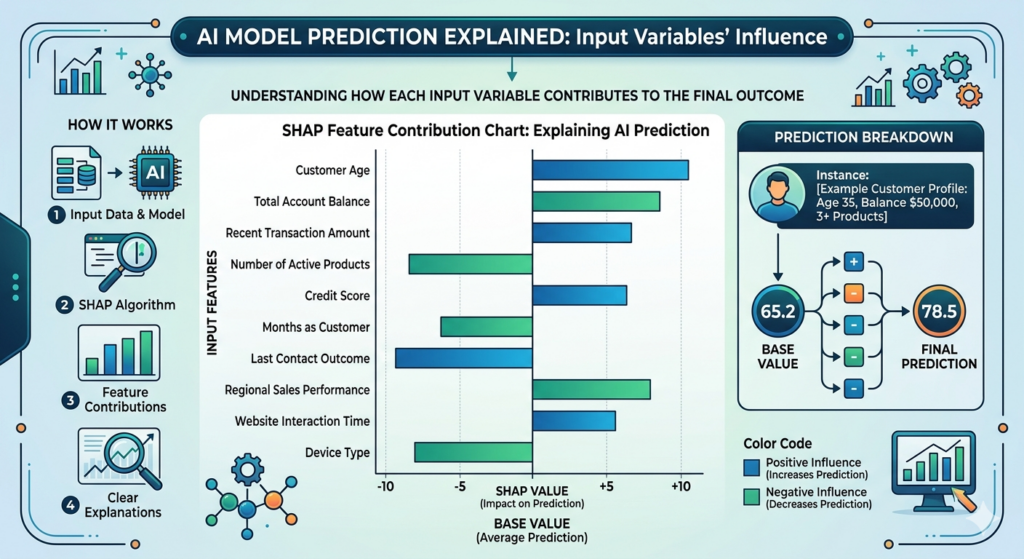

SHAP (SHapley Additive exPlanations) is another widely used method that measures the contribution of each feature to a prediction using Shapley values from cooperative game theory. SHAP values provide consistent and detailed explanations of model behavior.
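To show what a Shapley value measures, the sketch below computes exact Shapley values by brute force: it averages each feature's marginal contribution over every possible ordering of the features. This is only feasible for a handful of features, and it is not how the `shap` library works internally (practical tools rely on approximations and background data). The `model` function and the all-zero baseline are assumptions for illustration.

```python
from itertools import permutations

def value(coalition, instance, baseline, predict):
    """Model output when only features in `coalition` take the instance's values."""
    mixed = [instance[i] if i in coalition else baseline[i] for i in range(len(instance))]
    return predict(mixed)

def shapley_values(predict, instance, baseline):
    """Exact Shapley values: average marginal contribution over all feature orderings."""
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        present = set()
        previous = value(present, instance, baseline, predict)
        for i in order:
            present.add(i)
            current = value(present, instance, baseline, predict)
            totals[i] += current - previous
            previous = current
    return [t / len(orderings) for t in totals]

def model(x):
    """Hypothetical model with an interaction term, used only for this sketch."""
    return 2.0 * x[0] + x[1] * x[2]

instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, instance, baseline)
print(phi)  # [2.0, 3.0, 3.0]
```

The three values add up to 8.0, the difference between the prediction for the instance and the prediction for the baseline, and the interaction between the second and third features is split evenly between them.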

Partial dependence plots and sensitivity analysis are also used to visualize how changes in input variables affect model predictions. These techniques help transform complex models into systems that humans can understand and evaluate.
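A partial dependence curve can be computed with very little code: fix one feature to a grid value in every row, average the predictions, and repeat for each grid value. The sketch below assumes a hypothetical pricing model and three made-up rows; in practice the resulting curve would be plotted.

```python
def model(row):
    """Hypothetical model for this sketch: price rises with size and falls with age."""
    size, age = row
    return 100.0 + 2.0 * size - 0.5 * age

def partial_dependence(predict, rows, feature_index, grid):
    """For each grid value, set the feature to it in every row and average the predictions."""
    curve = []
    for grid_value in grid:
        modified = [r[:feature_index] + [grid_value] + r[feature_index + 1:] for r in rows]
        curve.append(sum(predict(m) for m in modified) / len(modified))
    return curve

rows = [[50.0, 10.0], [80.0, 30.0], [120.0, 5.0]]  # [size, age], made-up data
grid = [40.0, 80.0, 120.0]

curve = partial_dependence(model, rows, feature_index=0, grid=grid)
for grid_value, average in zip(grid, curve):
    print(f"size={grid_value:.0f} -> average prediction {average:.1f}")
```

For this linear stand-in the curve is a straight line, but the same procedure applied to a complex model reveals nonlinear effects such as thresholds or saturation.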

Business Applications of Explainable AI

Explainable AI is particularly important in industries where decisions must be transparent, auditable, and accountable.

- In financial services, explainable models help justify credit decisions, detect fraud, and comply with regulatory requirements. Banks must often explain why a loan application was approved or rejected.

- Healthcare organizations use explainable AI to support clinical decision-making. Medical professionals need to understand why an AI system recommends a specific diagnosis or treatment.

- Insurance companies rely on explainable models for risk assessment and claims processing, ensuring that automated decisions remain fair and transparent.

- In manufacturing, explainable AI helps engineers understand predictive maintenance models and identify the factors contributing to equipment failure.

Across industries, explainability ensures that AI systems support human decision-making rather than replacing it without accountability.

Best Practices for Implementing Explainable AI

Organizations should adopt a structured approach when integrating explainability into their AI systems.

One important practice is selecting appropriate models based on the required level of transparency. In some scenarios, simpler interpretable models may be preferred over highly complex ones.

It is also essential to incorporate explainability during the model development phase rather than adding it later as an afterthought. Designing models with interpretability in mind improves long-term reliability.

Organizations should also document model decisions and maintain audit trails. This documentation helps stakeholders review how models behave and ensures accountability in automated systems.

Continuous monitoring is another critical practice. As data evolves over time, organizations must ensure that models remain accurate, unbiased, and explainable.
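As one possible shape for the audit trail mentioned above, the sketch below builds a JSON-serializable record for a single automated decision. The field names, the version label, and the choice to store a hash of the inputs rather than the raw values are all assumptions for illustration; real requirements depend on the organization and the applicable regulations.

```python
import hashlib
import json
from datetime import datetime, timezone

def audit_record(model_version, features, prediction, explanation):
    """Build one JSON-serializable audit entry for a single automated decision."""
    payload = json.dumps(features, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Hash of the inputs, so the entry can be matched to stored data later.
        "input_hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "prediction": prediction,
        "explanation": explanation,
    }

record = audit_record(
    model_version="credit-model-1.2.0",  # hypothetical version label
    features={"income_k": 50, "debt_ratio": 0.4},
    prediction="approved",
    explanation={"income_k": 1.5, "debt_ratio": -1.0},
)
print(json.dumps(record, indent=2))
```

Storing the explanation next to the prediction and model version lets reviewers reconstruct why a decision was made even after the model has been retrained.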

Finally, collaboration between data scientists, business teams, and compliance experts helps ensure that explainability aligns with both technical and regulatory requirements.

Challenges in Explainable AI

Despite its importance, implementing Explainable AI presents several challenges.

- One challenge is balancing accuracy and interpretability. Highly complex models often provide better predictive performance but are harder to explain.

- Another challenge involves translating technical explanations into insights that non-technical stakeholders can understand. Effective explainability requires communication methods that are accessible to business leaders and end users.

- Explainability techniques may also increase computational complexity, especially when analyzing large models or datasets.

- Finally, organizations must ensure that explanations themselves are reliable and not misleading. Poorly designed explanation systems may oversimplify model behavior or create incorrect interpretations.

Addressing these challenges requires careful model design, robust evaluation frameworks, and strong collaboration between technical and business teams.

Conclusion

Explainable AI plays a vital role in making artificial intelligence systems transparent, trustworthy, and accountable. As AI becomes more deeply integrated into enterprise decision-making processes, organizations must ensure that automated models can explain their reasoning clearly.

By adopting explainability techniques, businesses can improve trust in AI systems, meet regulatory requirements, detect potential biases, and enhance model performance. Transparent AI systems enable better collaboration between humans and machines, allowing organizations to fully leverage the benefits of artificial intelligence.

ABOUT THE AUTHOR

Abhishek Bhosale

COO, Internet Soft

Abhishek is a dynamic Chief Operations Officer with a proven track record of optimizing business processes and driving operational excellence. With a passion for strategic planning and a keen eye for efficiency, Abhishek has successfully led teams to deliver exceptional results in AI, ML, core banking, and blockchain projects. His expertise lies in streamlining operations and fostering innovation for sustainable growth.